this is not a blog

#1 - LLMs and artificial "intelligence"

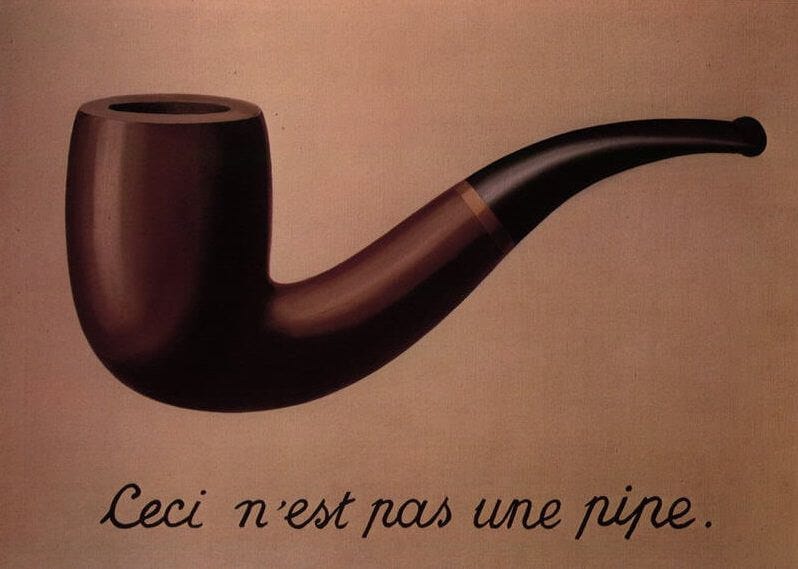

This is The Treachery of Images — a painting by René Magritte. Magritte’s a bit of a silly fella! He paints a pipe, and then writes “this is not a pipe” underneath. But by doing so, he asks an acerbic question that’s relevant to recent advancements in AI/ML: is an image of an object the same as the object itself?

Obviously, we have a good sense towards what Magritte thinks! Though for what it’s worth, Magritte didn’t have to deal with DALL-E 2 and Stable Diffusion chomping up his works and spitting out pictures of slightly distorted tophats with “this is not a tophat” written underneath. If that was the case, maybe he’d write “this is not a pipe // and by the way fuck you heathen robots stop stealing my art” (in French).

Joking aside, there’s been a lot of discourse (especially from those without prior AI/ML experience or knowledge) surrounding GPT-3 (ChatGPT, Bing AI). There have been concerns relating to academic integrity, loss of jobs, and even courtship… Generally speaking, people are worried about AGI (artificial general intelligence).

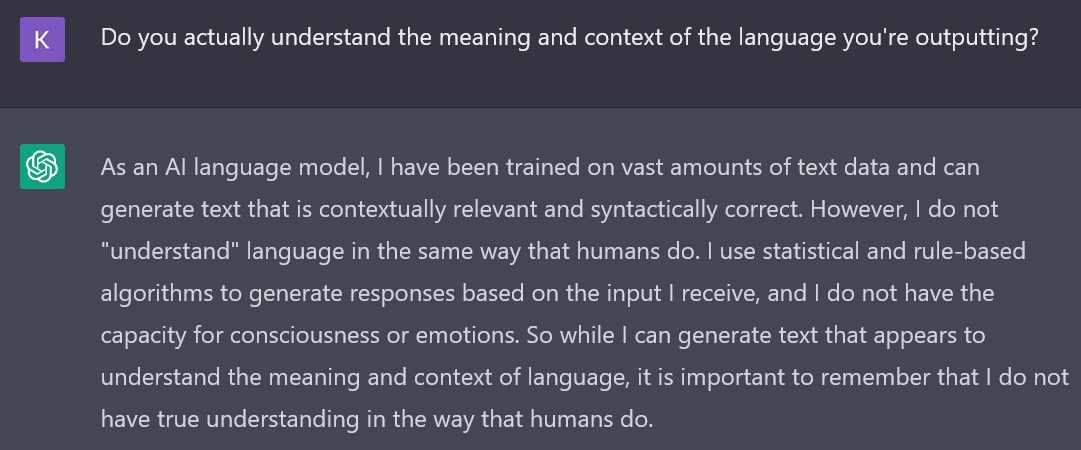

One of the key concerns about AGI is whether or not these systems will truly be able to understand the meaning and context of the language they are processing1 — I’m going to say no. Yann LeCun and Gary Marcus agree on this (which is pretty big seeing as the two have been certified AI beeflords). I asked ChatGPT as well:

This lines up with how ChatGPT was built. Stephen Wolfram’s blog breaks down the specifics of neural networks and ChatGPT exceptionally well. There’s something missing with regards to how large language models digest semantic grammar. GPT-3 has 175B parameters, nearly twice the number of neurons in a human brain. Of course, those neurons account for around 100T connections — but speculation is that GPT-4 will rival even that count in parameters. Even if GPT-4 has 100T parameters and can conceivably rizz up anyone on Hinge without fail, I don’t believe it’s AGI.

Ludwig Wittgenstein wrote about the concept “meaning as use”, positing that meaning in language is determined by its usage rather than its inherent properties (i.e. linguistic etymology). Under this framework, if a model is unable to understand context in language (with large language models essentially playing a giant game of word association via advanced statistics), how could it understand meaning? And if a model is unable to understand the meaning of its output, I would find it hard to consider it as AGI due to a lack of similarities with human cognition.

An argument can be made in that it doesn’t matter. Who cares if the model actually understands linguistic meaning if the output is adequate? There are use cases and examples where this is probably true. Take customer service for instance. You don’t want a chatbot to be too human-like (i.e. issues with the uncanny valley) — if it identifies key words and phrases in user messages and associates them with the appropriate responses, that works! But there’s no huge, society-shifting “wow” moment in that.

The most popular use cases for LLMs currently lie in rote, procedural work (e.g. document processing & analysis, non-nuanced language translation2, Canvas discussion assignments with 300 word count requirements) and human labor facilitation3. Stephen Wolfram brings up a great point related to this:

Human language is fundamentally imprecise, not least because it isn’t “tethered” to a specific computational implementation, and its meaning is basically defined just by a “social contract” between its users. But computational language, by its nature, has a certain fundamental precision—because in the end what it specifies can always be “unambiguously executed on a computer”.

In order to deal with use cases involving more layers of abstraction (i.e. the more “imprecise” parts of language), LLMs like GPT-3 are going to need to improve their ability to understand context in pursuit of greater output flexibility. Asking ChatGPT to do something like “write a loop in Python that iterates 100 times, printing the count each time” is straightforward. We’re already seeing major impact here — GitHub Copilot already accounts for an average of 46% of a developer’s code4. In spite of that, there's still so much room for improvement!

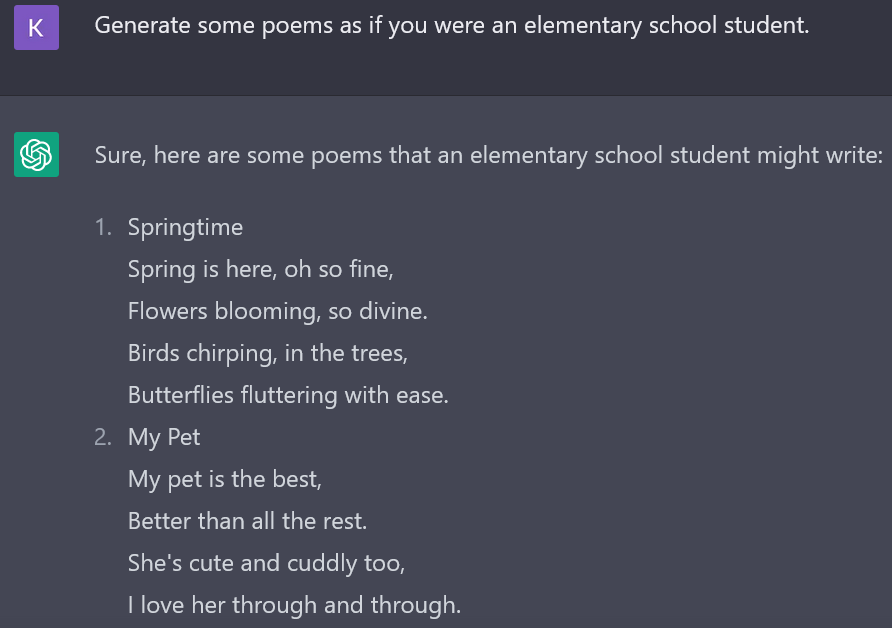

Let me give an example:

Two things here:

These are terrible poems. ChatGPT had the gall of pumping out eight of them. For the sake of brevity (and your sanity5) I’ve cut them.

ChatGPT is really underestimating elementary school students.

There’s this amazing post on Poetry Foundation where a graduate student taught poetry to fourth graders. The stuff they came up with is amazing. Here’s one (though you should definitely read them all):

“The life of my heart is crimson.”

Holy shitttttt.

In the article, Hannah Gamble writes about how middle school and high school students lose that creativity as they learn how “normal people talk”. In her examples, there’s an consistent reliance on clichés. We see that with ChatGPT too: “so divine”, “birds chirping in the trees”, “all the rest”, “through and through” — cliches & repeated phrasings ad nauseum.

And this makes sense! GPT-3 was trained on datasets like Common Crawl and WebText that scrape text from sources like Reddit. If you train a model on a bunch of text likely containing a plethora of clichés then ask it to play word association, what else can you expect?

I don’t mean to downplay the significance of ChatGPT. It’s undoubtedly a seminal point in AI/ML advancement, but let’s be realistic: it’s unlikely that models based on neural networks will reach true AGI before a financial cost, computing power, dataset size, or other bottleneck emerges. And at least from my perspective and personal heuristics, it’s hard to believe that a massive technological leap (machine learning → true artificial intelligence) will result from linear, brute force increase of model parameters.

Let’s return to the question we started with: is an image of an object the same as the object itself? Or in our case, is external perception of intelligence the same as intelligence itself? My answer here is “not yet”. And while we may reach AGI, whether it be through neural networks, causal inference, or some yet-still-unknown method/technique, for now we should remember:

This is not a pipe, this is not intelligence, this is not a blog.

People are really scared of Bing AI (Sydney)’s outputs here. They’ve even limited the number of replies she’s (this single word choice requires a whole other piece) able to give. But it’s worth noting that this isn’t the first time a Microsoft AI has gone ‘haywire’ — remember Tay?

I didn’t appreciate the nuance & difficulty of translation until I read Babel by R.F. Kuang. It’s historical fantasy fiction but language plays an immense role — would recommend!

According to VCs it's mandatory that we back hundreds of 'AI' copywriting tools.

This is probably an great example of how we overuse mean and underuse median. If GitHub has a bunch of small-brained people like me that heavily rely on Copilot, I’d bet the median wouldn’t be so eye-catchingly high.

It also composed an extremely strange couplet for a poem about Halloween: “Trick or treat, give me some candy, / Or I might get a little bit handy.” Excuse me? What is going on here? Who let ChatGPT cook?

Hey! I think it's really great that concerns about AI are becoming more mainstream. I still find myself in the behavioralist camp because I think "Chinese Room" style arguments draw a false dichotomy and that clearly modern AI are far more intelligent than, say, flatworms, and some have argued that they pass more self-awareness tests than dogs. Can you convince me that you are, as you claim, intelligent? How am I to know that this blog post wasn't written by an AI?